How to write great key results

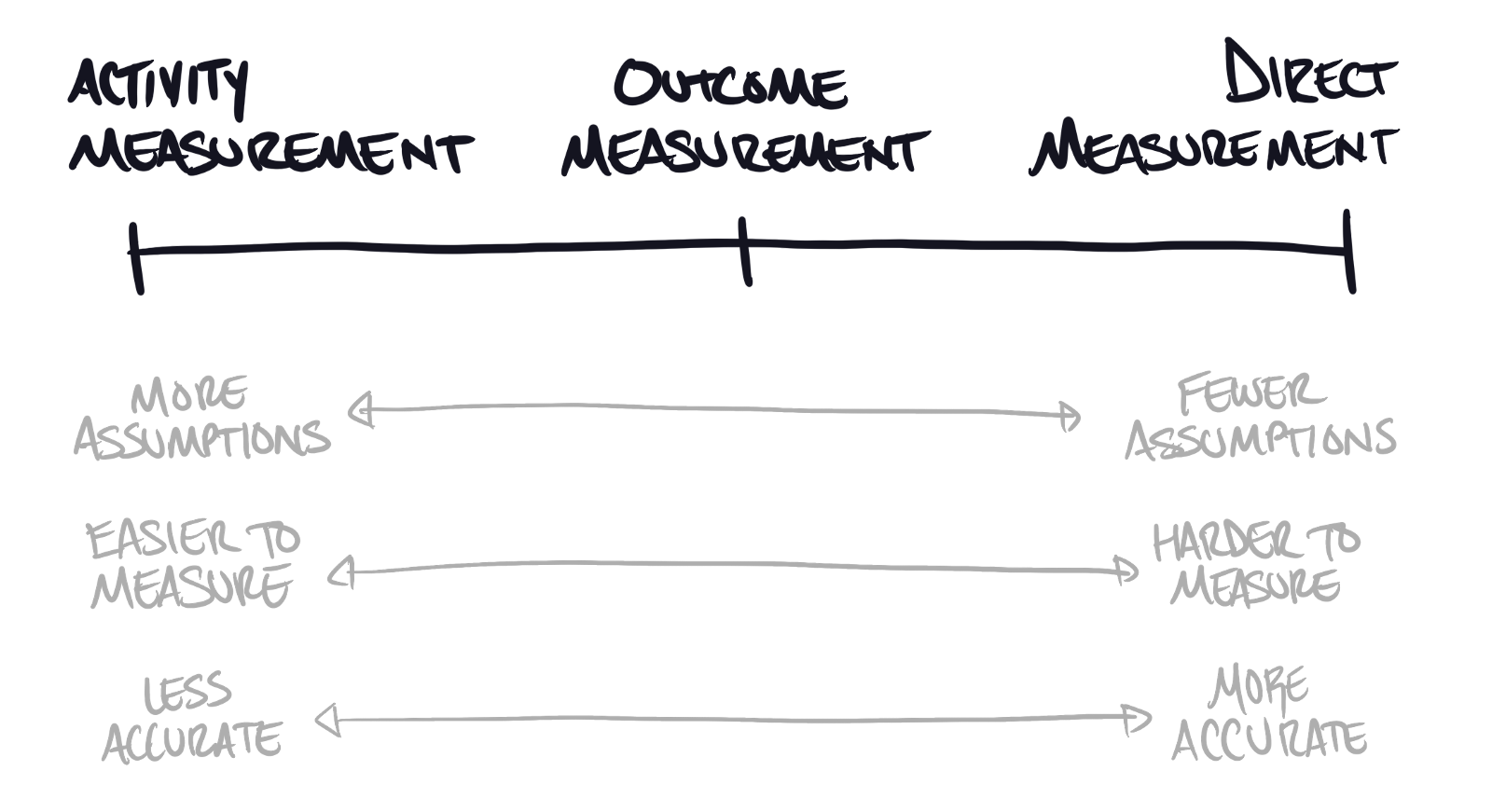

Key results tell us whether we’re making progress toward our objectives. They seem straightforward, but writing great ones requires navigating a fundamental tension between what we want to measure and what we can actually measure.

The perfect key result (that doesn’t exist)

The ideal key result would directly measure your objective. If your goal is to “Make our website more resilient,” the perfect metric would be something like “Website Resiliency Score” that captures exactly what you’re trying to achieve. Picture a clean dashboard where resilience gets a number, and your key result becomes “Increase Website Resiliency from 12 to 17.”

That would be elegant. Clear. Unambiguous.

It’s also impossible.

Website resilience, like most meaningful business objectives, resists direct measurement. It’s multidimensional, nuanced, and inherently complex. You might as well try to measure “engagement” or “culture” or “innovation” with a single number. These concepts matter enormously, but they’re too big and too rich to fit into a simple metric.

Direct measurement works beautifully when it works at all, which is almost never.

Instead, we need proxies.

Outcome-based key results: The sweet spot

When you can’t measure something directly, look for what changes around it. Think about what you’d observe as your objective improves. What downstream effects would you notice?

Take our website resilience example. As resilience improves, you might see fewer unplanned outages, faster recovery from incidents, or better geographic distribution of your infrastructure. You can’t directly measure “resilience,” but you can track these surrounding indicators that echo resilient behavior.
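As a rough sketch of how those indicators could be computed, assume each incident is logged with a start time, a recovery time, and a flag for whether it was planned. The `Incident` record, its field names, and the sample data are all hypothetical; they are not taken from any particular monitoring tool.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Incident:
    """Hypothetical incident record; the field names are assumptions."""
    started: datetime
    recovered: datetime
    planned: bool


def unplanned_outage_stats(incidents):
    """Return (count, total downtime, mean time to recovery) for unplanned incidents."""
    durations = [i.recovered - i.started for i in incidents if not i.planned]
    if not durations:
        return 0, timedelta(0), timedelta(0)
    total = sum(durations, timedelta(0))
    return len(durations), total, total / len(durations)


# Made-up sample data for one quarter.
incidents = [
    Incident(datetime(2024, 1, 5, 9, 0), datetime(2024, 1, 5, 9, 45), planned=False),
    Incident(datetime(2024, 2, 10, 2, 0), datetime(2024, 2, 10, 4, 0), planned=True),
    Incident(datetime(2024, 3, 1, 14, 0), datetime(2024, 3, 1, 14, 15), planned=False),
]

count, total, mttr = unplanned_outage_stats(incidents)
print(count, total, mttr)
```

A key result built on these numbers might target fewer unplanned outages or a shorter mean time to recovery, depending on which the team believes best echoes resilience.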

This is the art of outcome-based measurement. You’re identifying the constellation of metrics that orbit your true objective. These proxies give you concrete numbers while preserving enough abstraction to maintain flexibility in how you achieve your goal.

The strength of outcome-based key results lies in their balance. They’re specific enough to be measurable yet broad enough to avoid prescribing exactly how you’ll succeed. A team focused on reducing unplanned downtime has multiple paths to victory, from better monitoring to more robust deployment practices to improved architecture.

But this approach demands something from you: assumptions.

When you measure unplanned downtime as a proxy for resilience, you’re assuming that downtime reflects the underlying health you care about. You’re betting that improving one will improve the other. Sometimes this assumption holds beautifully. Sometimes it doesn’t.

Many teams have achieved their key results while failing their objectives entirely, usually because their assumptions proved wrong. The numbers went up and to the right, but the underlying reality remained unchanged. This isn’t a failure of measurement so much as a failure of thinking clearly about what the measurements represent.

There’s another challenge with outcome-based metrics: they often require infrastructure you don’t have. If you’re building a new product, you might not yet have the monitoring in place to track downtime or recovery speed. The metrics make perfect sense in theory but remain out of reach in practice.

When outcome-based measurement isn’t feasible, you’re left with one option.

Activity-based key results: Your last resort

If you can’t measure outcomes, you can always measure actions. You can track the behaviors that should lead to better outcomes, even when those outcomes themselves remain opaque.

Continuing our resilience example: if measuring uptime is impossible, you could track the number of automated tests running before each deployment. It’s not as clean as tracking actual downtime, but it’s a concrete action that should contribute to system reliability.

Activity-based metrics require even more assumptions than outcome-based ones. You’re betting that automated testing improves uptime, and that improved uptime reflects better resilience. Each additional layer of assumption increases the risk that your efforts miss the mark.
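To see how stacked assumptions compound, here is a small sketch. It assumes each link in the chain holds independently and assigns each one a made-up confidence. Both the independence and the numbers are illustrative assumptions, not measurements.

```python
import math


def chain_confidence(link_confidences):
    """Probability that every assumption in a chain holds, assuming independence."""
    return math.prod(link_confidences)


# Illustrative, made-up confidences for each assumption.
outcome_based = [0.8]        # downtime reflects resilience
activity_based = [0.8, 0.8]  # testing improves uptime; uptime reflects resilience

print(round(chain_confidence(outcome_based), 2))
print(round(chain_confidence(activity_based), 2))
```

Even when every individual link looks fairly safe, the longer chain ends up less trustworthy than any single link in it.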

The more insidious risk is being right, but only weakly so. Let’s say automated testing does improve your uptime, and better uptime does reflect improved resilience. Congratulations—you’ve succeeded. But what if testing was only a small part of the resilience puzzle? What if your database architecture or network topology posed bigger risks?

Activity-based key results can lock you into solving problems in specific ways. They trade flexibility for clarity: you know exactly what to do, but you’ve committed to one way of doing it. Sometimes this trade-off makes sense, particularly when you’re resource-constrained or operating in unfamiliar territory. But it’s a trade-off worth acknowledging.

Putting it all together

The question “What makes a key result good?” resists simple answers because context matters enormously. A metric that works perfectly for one team might be completely wrong for another. The key is finding the right balance between precision and practicality, between what you want to measure and what you can actually track.

Start by attempting direct measurement. It rarely works, but when it does, the clarity is unmatched. When direct measurement proves impossible—which it usually will—look for outcome-based proxies. Find the metrics that change as your objective improves, and make sure you understand the assumptions connecting them to what you really care about.

If outcome-based measurement remains out of reach, fall back to activity-based metrics. Measure the behaviors that should drive the outcomes you want. But stay honest about the assumptions you’re making, and keep looking for opportunities to measure closer to your true objective.
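The fallback order described above can be written down as a tiny decision helper. This is only a sketch: the function name and its two yes/no inputs are hypothetical, and in practice answering those two questions is the hard part.

```python
def choose_key_result_type(can_measure_directly, can_measure_outcomes):
    """Pick the closest feasible kind of measurement, trying the best option first."""
    if can_measure_directly:
        return "direct"
    if can_measure_outcomes:
        return "outcome-based"
    # Last resort: measure the actions that should drive the outcome.
    return "activity-based"


# Example: no direct metric exists, but downtime can be tracked.
print(choose_key_result_type(False, True))
```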

The most dangerous key results are the ones that feel scientific but rest on shaky foundations. Better to acknowledge the limitations of your measurement than to mistake activity for achievement.

Writing great key results is difficult precisely because it forces you to think clearly about what you’re really trying to accomplish. But that’s also what makes it valuable. The process of moving from vague objectives to concrete measurements reveals gaps in your thinking and exposes assumptions you didn’t know you were making.

The goal isn’t perfect measurement—it’s thoughtful measurement that keeps you oriented toward what actually matters.

If you have any questions, comments, or thoughts, please reach out. I’m always happy to chat.